Communication Loop in Testing

Communication

A general definition of communication is the process of exchanging information through a common system of symbols, signs, or behaviors.

From the moment we get up in the morning until we go to bed, we communicate in different ways. Besides the many meetings in which face-to-face communication plays a central role, there is also a great deal of digital communication, both in business and in our personal lives.

Business projects also involve their own specific communication at various levels. The steering committee, or program management, wants to communicate in broad outlines, while the sub-levels need detailed and substantive communication. This happens, for example, in software development, testing, training, management, and more.

In this blog, I would like to discuss the detailed and substantive communication within one project area, “testing”: the communication loop in testing!

Complexity

At TestMonitor, we have more than 22 years of experience in complex test projects, and we developed our software and methodology based on that experience. We believe that insight into quality is the key to successful IT. With TestMonitor, you gain insight into the quality of software, and you can easily test software yourself, with direct input from end users. That is why we focus on two questions: Does it work? And can you work with it?

In our philosophy, every user is a tester, and if you give them the right methodology, you will be amazed at how much insight into quality they can easily provide. Whether that user is the CEO, a member of the service department, or a professional tester, everyone can test with the right structure and tools.

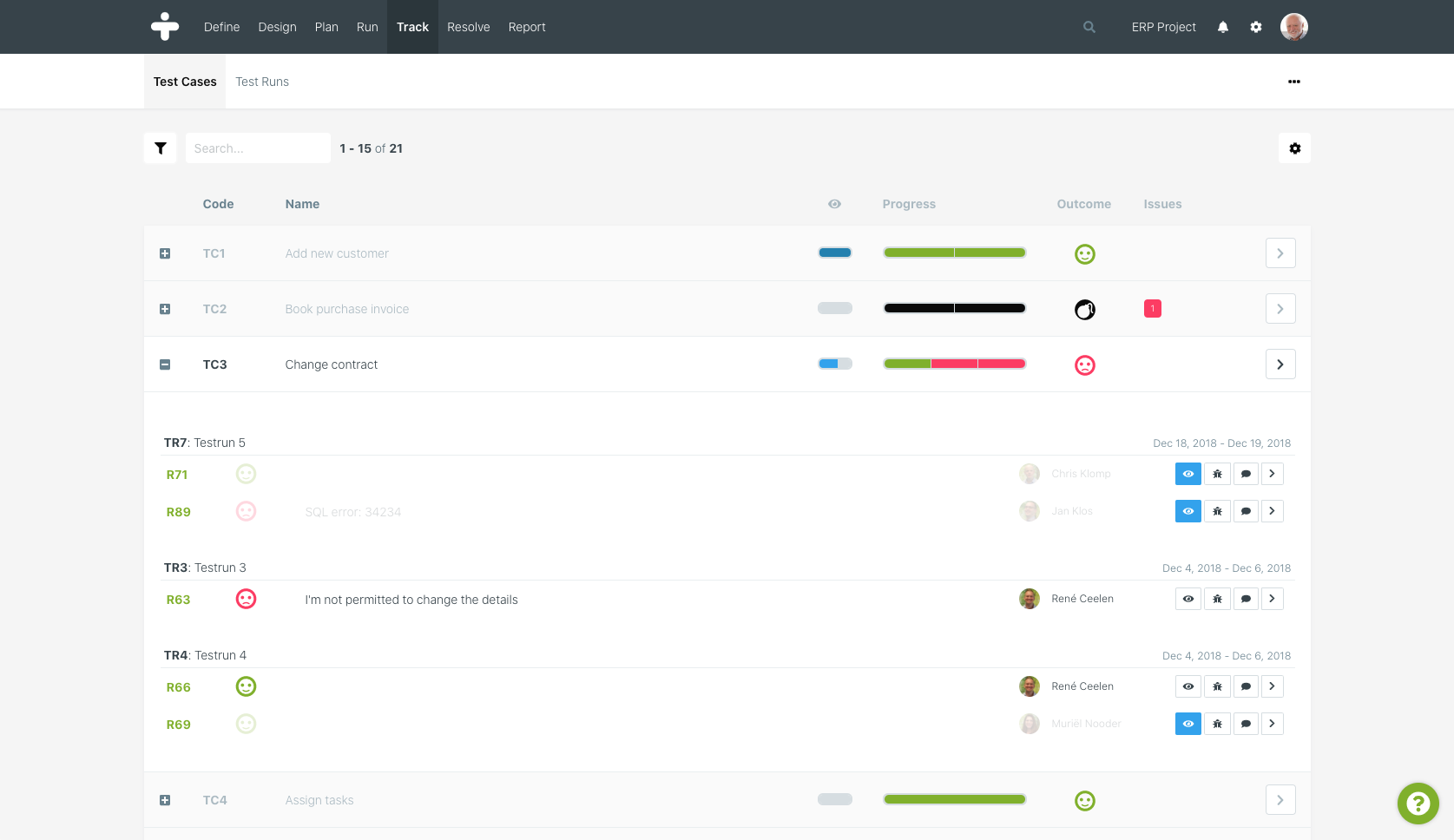

We have developed smart filter options and specific functionalities within TestMonitor to properly manage and analyze the multitude of test results. If five people each test 10 cases, you will have 50 test results upon completion. You then analyze these results and create “issues,” each linked to specific test results. The image above shows how the multitude of test results eventually leads to issues.

Each issue is linked to an owner, someone who ensures that the issue is actually resolved. Once an issue is resolved, the linked test cases or test runs are retested to confirm that it has indeed been fixed. It’s a simple and straightforward quality cycle.
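The quality cycle described above can be pictured as a small state machine. This is a minimal sketch, assuming a hypothetical `Issue` model with three states; it is not TestMonitor's implementation, and reopening an issue when a retest fails is our own assumption.

```python
from dataclasses import dataclass, field
from enum import Enum


class IssueState(Enum):
    OPEN = "open"
    RESOLVED = "resolved"  # owner reports a fix; a retest is still needed
    CLOSED = "closed"      # the retest confirmed the fix


@dataclass
class Issue:
    # Hypothetical data model for illustration, not TestMonitor's schema.
    code: str
    owner: str
    linked_test_cases: list[str] = field(default_factory=list)
    state: IssueState = IssueState.OPEN

    def resolve(self) -> None:
        """The owner marks the issue as resolved; it now awaits a retest."""
        if self.state is not IssueState.OPEN:
            raise ValueError(f"{self.code} is not open")
        self.state = IssueState.RESOLVED

    def record_retest(self, results: dict[str, bool]) -> None:
        """Close the issue only if every linked test case passed the retest.
        Assumption: any failed or missing retest sends it back to the owner."""
        if self.state is not IssueState.RESOLVED:
            raise ValueError(f"{self.code} has not been resolved yet")
        passed = all(results.get(case, False) for case in self.linked_test_cases)
        self.state = IssueState.CLOSED if passed else IssueState.OPEN


# Usage: one linked test case still fails, so the issue goes back to its owner.
issue = Issue("I1", owner="alice", linked_test_cases=["TC-3", "TC-7"])
issue.resolve()
issue.record_retest({"TC-3": True, "TC-7": False})
print(issue.state.value)  # open
```

Requiring a successful retest before closing is what makes this a loop rather than a to-do list: "resolved" only means the owner believes the issue is fixed.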

To prevent issue lists from "wandering around" in separate places, only to resurface right before a deadline when they still need to be developed or implemented, our maxim is: if an issue is not in TestMonitor, it will not be considered. In short: the truth can always be found in TestMonitor! The advantage of this approach is that all issues are analyzed and monitored from one central location. This allows us to manage simple test runs with a few testers in TestMonitor, but also very complex test runs with 500 users in different countries.

Previous research has shown that an average ERP test process involves between 500 and 700 issues, generally handled by multiple stakeholders, including the organization, the implementation partner, and the consultants. These issues do not arise from just 500 test results, but from many times that number. At TestMonitor, we have determined that there are, on average, eight test results for every issue. So in a project in which 500 issues have been created, roughly 4,000 test results have been registered by different testers. In these 4,000 results, everything is mixed together: the successful and the unsuccessful ones.
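Written out, this rule of thumb is a single multiplication. The 700-issue line simply applies the same average factor of eight to the upper end of the ERP range; it is an extrapolation, not a measured figure.

```latex
\begin{aligned}
N_{\text{results}} &\approx 8 \times N_{\text{issues}} \\
8 \times 500 &= 4{,}000 \\
8 \times 700 &= 5{,}600
\end{aligned}
```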

In summary, all test designs, test results, and issues are in TestMonitor, where they can be managed and analyzed. However, we found that a lot was still being communicated about certain results and issues outside of TestMonitor, either verbally or by email. Imagine separate emails being sent about the status, the impact, or the owner's open questions for each of those 500 issues. You would quickly end up with an uncontrollable mess of email.

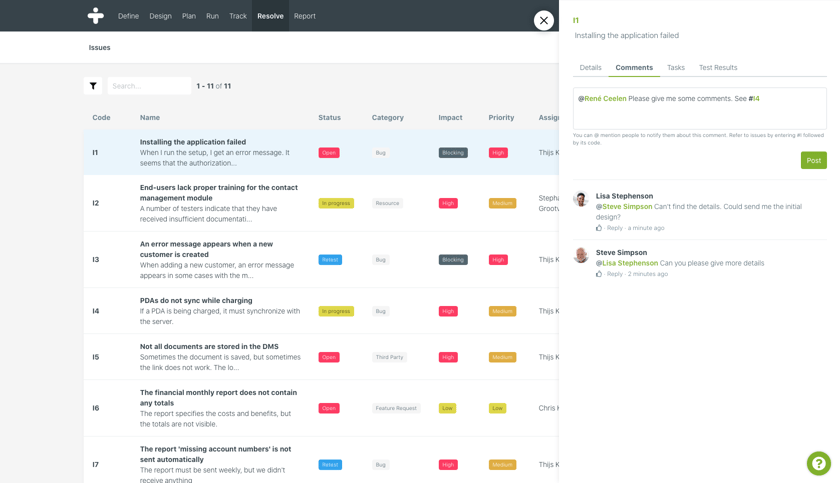

To solve this problem, we have built a complete communication platform into TestMonitor that lets you post comments on both issues and test results. Notifications keep all parties involved informed.

Comments on issues

To find out how powerful the communication platform within TestMonitor is, we examined one project. A total of 790 issues were registered within this project, and 73 different people posted a total of 6,150 comments on them. Imagine how much email chaos this would create and how much it would hurt communication and productivity, not to mention the difficulty of keeping track of and reading all this information per issue. Notably, one blocking issue alone received more than 100 comments.

Anyone with the necessary permissions can comment on any issue, but you can do so much more:

- Replies to a comment

Click the reply button, and your comment is nested under the parent comment. This immediately creates a conversation structure.

- Thumbs up

You may not feel the need to respond, but you do agree with the comment that was made. A simple "thumbs up" shows the person who posted the comment how many people found it relevant.

- @ mention

With an @ mention, you can immediately notify people in the project. The mentioned person automatically receives a notification that draws their attention to the comment.

- # link

With a # link, you can reference existing issues in the project from your comment. How often does it happen in a project that issues I1 and I63 are virtually identical? By combining a # link with an @ mention, you can link these issues in your comment and directly notify the owner(s) of the issue(s).
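To make the idea behind @ mentions and # links concrete, here is a minimal sketch of how a comment might be scanned for both. The syntax is an assumption for illustration only: `@name` for a mention and `#I<number>` for an issue link (modeled on issue codes such as I1 and I63). The function names and the `issue_owners` lookup are hypothetical; this is not TestMonitor's parser.

```python
import re

# Hypothetical syntax: "@name" mentions a person, "#I63" links issue I63.
# The lookbehind keeps e-mail addresses such as a@b.com from matching.
MENTION = re.compile(r"(?<!\w)@(\w+)")
ISSUE_LINK = re.compile(r"(?<!\w)#(I\d+)\b")


def parse_comment(text: str) -> tuple[list[str], list[str]]:
    """Return (mentioned names, linked issue codes), deduplicated,
    in order of first appearance."""
    mentions = list(dict.fromkeys(MENTION.findall(text)))
    links = list(dict.fromkeys(ISSUE_LINK.findall(text)))
    return mentions, links


def notify_recipients(text: str, issue_owners: dict[str, str]) -> set[str]:
    """Everyone mentioned, plus the owner of every linked issue we know about."""
    mentions, links = parse_comment(text)
    owners = {issue_owners[code] for code in links if code in issue_owners}
    return set(mentions) | owners


comment = "@sam this looks identical to #I63, see also #I1."
print(parse_comment(comment))  # (['sam'], ['I63', 'I1'])
```

Deriving recipients from the linked issues means the owners of duplicate issues are notified even if the author did not mention them explicitly.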

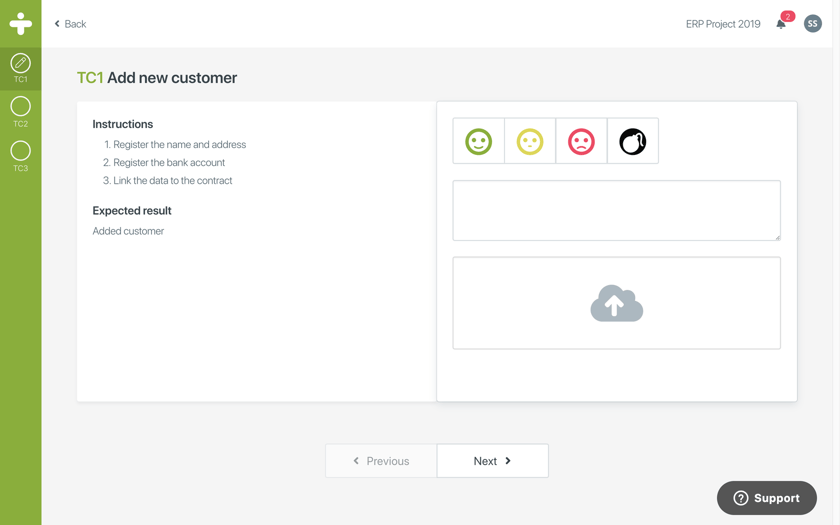

Comments on test results

As a test manager, how often do you end up in a situation in which two testers with the same background perform the same test, yet have completely different test results? Of course, that doesn't automatically mean someone or something is wrong, but sometimes you want a tester to reconsider their test result, especially if you can offer some extra context.

Without a communication platform, you would send an email asking whether the test result could be re-evaluated. That email would probably end up on a large pile of emails; the tester would likely have other priorities, leaving the test manager with an "open end." One open end is still manageable, but a large number of them quickly becomes confusing and complex.

Here, too, we have built in the communication loop. When analyzing test results in TestMonitor, you can now quickly add a comment that is delivered directly to the people involved. And with the @ mention functionality, you can even bring multiple people into the "discussion."

The tester receives a notification in TestMonitor and, depending on their personal preferences, also in Slack or by email, for example.

Combined with the "mark as read" functionality, you stay in control of the open ends: you mark a test result as read only once its follow-up has been fully completed. If you also set up a filter that shows only the unread test results, you have a real grip on your quality cycle.
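The "mark as read" plus "unread filter" combination boils down to a boolean flag and a filter over it. This is a minimal sketch, assuming a hypothetical `ResultRecord` model with a `read` field; the names are illustrative and not part of TestMonitor's API.

```python
from dataclasses import dataclass


@dataclass
class ResultRecord:
    # Hypothetical model of a single test result, for illustration only.
    case: str
    tester: str
    status: str        # e.g. "passed" or "failed"
    read: bool = False  # set via "mark as read" once follow-up is complete


def mark_as_read(result: ResultRecord) -> None:
    """Close an open end: the follow-up on this result is finished."""
    result.read = True


def open_ends(results: list[ResultRecord]) -> list[ResultRecord]:
    """The 'unread' filter: only results that still need attention."""
    return [r for r in results if not r.read]


# Two testers ran TC-1 with different outcomes; only Ann's result is settled.
results = [
    ResultRecord("TC-1", "ann", "passed"),
    ResultRecord("TC-1", "bob", "failed"),
    ResultRecord("TC-2", "ann", "passed"),
]
mark_as_read(results[0])
print([(r.case, r.tester) for r in open_ends(results)])
# [('TC-1', 'bob'), ('TC-2', 'ann')]
```

Because nothing leaves the filter until someone explicitly marks it as read, an unanswered comment cannot silently disappear the way an email can.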

Start testing with TestMonitor

If you need assistance or if you have any questions regarding TestMonitor, feel free to contact us at any time. We are here to help.